No one has stated the importance of making the web accessible to everyone better than the creator of the World Wide Web himself, Tim Berners-Lee:

“The power of the Web is in its universality. Access by everyone regardless of disability is an essential aspect”

This article looks at the current state of web accessibility and at how existing technologies can improve accessibility for people who use assistive technologies. Accessibility issues can affect anyone at any time in their lives, and disabilities are more common than one might think. For instance, a sports injury such as an injured wrist is a temporary disability that brings accessibility issues with it, because the person may find it hard to use a computer mouse.

What is Accessibility?

“Accessibility” makes sure people with disabilities can access the same information and benefits from a system as everybody else. “Usability” is a quality attribute that evaluates how easy a user interface (UI) is to use.

“Usability depends on Accessibility”, according to DigitalGov. So for the betterment of a product, it’s important to have a greater level of accessibility, which in turn will lay a solid foundation for good usability.

“For the web, accessibility means that people with disabilities can perceive, understand, navigate, and interact with websites and tools and contribute equally without barriers”, according to the W3C (World Wide Web Consortium). Many of us read the “disability” in this definition as referring only to blind people using screen readers. In fact, it covers a wide variety of impairments.

- Visual impairment also includes color blindness and limited vision, which can easily develop as we grow older.

- Disability categories also include temporary disabilities, such as a broken wrist that makes it difficult to use a mouse.

- We can all experience disabilities at some point in our lives through events that are often unavoidable, such as sports injuries, minor operations, or everyday accidents.

All these points underline the need to create products that work for a more diverse set of people. A notable approach to incorporating accessibility into the design process is “inclusive design” (also known as “universal design” or “design for all”).

Making the Web More Accessible Using Machine Learning

Machine learning now drives a huge number of day-to-day interactions on the web. On most social media sites, for example, machine learning (ML) algorithms help provide a richer and more personalized user experience. Machine learning is constantly being used to improve general consumer experiences, but it could be doing more to improve specific ones, such as the experience of people who rely on assistive technologies.

There are countless opportunities for applying machine learning to current web accessibility challenges. And beyond the application of pre-trained algorithms, machine learning holds promise for accessibility through the application of entirely new types of algorithms. But there’s a problem: a lack of well-annotated data.

Challenges surrounding the quality and quantity of data available

Unlike a large company such as Google, which has a great deal of data to work with, smaller companies may not have a dataset of a billion web pages whose semantics are well annotated.

So we may not be able to take the naïve end-to-end approach, in which a model takes an arbitrary page as input and outputs a fully accessible version of it. The challenge is to identify what we can do instead: solve sub-problems within the space, help build the missing data, or at least warn about issues without necessarily being able to fix them.

Existing Tools That Could Potentially Help With Accessibility Challenges

Image captioning

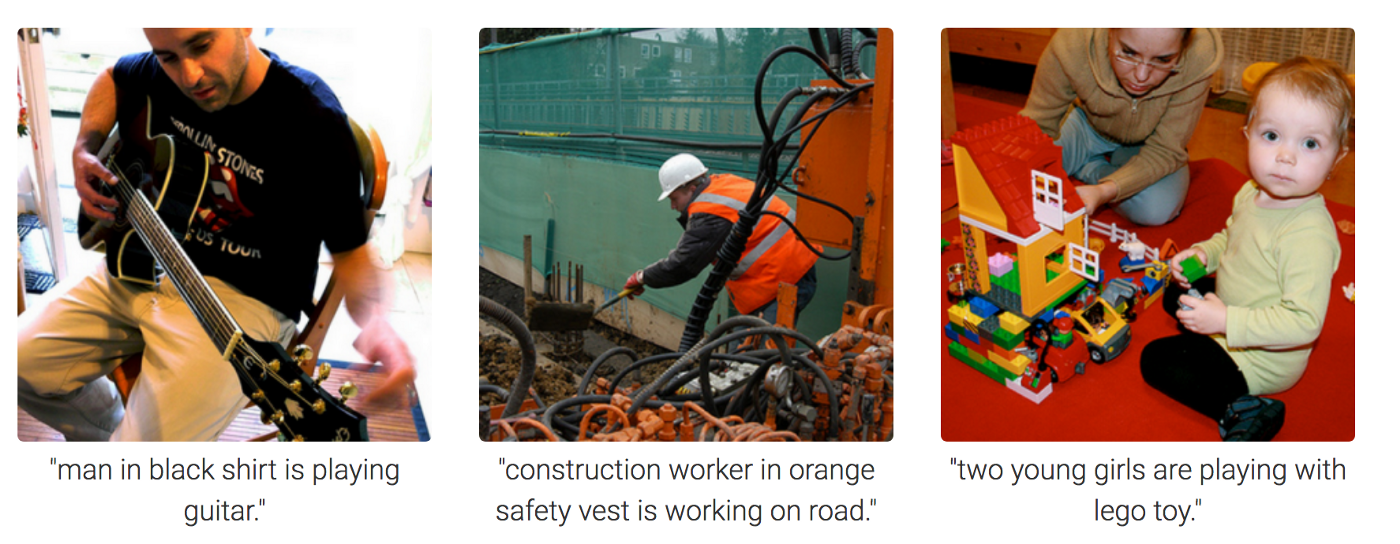

Image descriptions created by AI have come a long way. Image recognition techniques are used to describe the various components inside an image, such as “two cats” or “body of water.” As the algorithms improved, so did the descriptions: “two orange cats play with yarn.” Even more thorough descriptions, however, are often ineffective without context. This could be changing.

Facebook, for example, is now starting to contextualize image descriptions by whatever means they can, including user preferences, recent conversations, and events related to the user. They’re calling these machine-generated image descriptions alternative text.

Several accessibility specialists disagree with this terminology, arguing that a machine can never accurately predict a content author’s intent. They agree, however, that a computer-generated description is preferable to no description. And, when combined with automated accessibility testing, captioning tools like these can help us generate alternative text for images or serve as a fallback for assistive technology when no alt text is available.

Automated accessibility testing tools on the market today routinely check whether or not an image has alternative text. By integrating with image recognition technology, they could run image recognition alongside that check, allowing us to do a couple of different, very powerful things:

- Provide suggestions for alt text in the generated report

- Compare those suggestions to any pre-existing alt text and flag it if the alt text seems to describe the image poorly (that is, if the match has a low confidence score)
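
The two report features listed above could be sketched roughly as follows. This is a minimal illustration, not how any particular testing tool works: the captions are assumed to come from some image recognition service and are passed in as a plain dictionary, the names `ImgCollector`, `token_overlap`, and `audit_alt_text` are hypothetical, and simple word overlap stands in for a real confidence score.

```python
from html.parser import HTMLParser


class ImgCollector(HTMLParser):
    """Collects (src, alt) for every <img>; alt is None when the attribute is absent."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr_map = dict(attrs)
            self.images.append((attr_map.get("src"), attr_map.get("alt")))


def token_overlap(a, b):
    """Jaccard similarity of lowercase word sets (a crude stand-in for a real score)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    if not words_a or not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


def audit_alt_text(html, suggested_captions, threshold=0.2):
    """Return (src, issue, suggestion) rows for an accessibility report.

    suggested_captions maps image src to a machine-generated caption
    (assumed input; producing it is outside this sketch).
    """
    parser = ImgCollector()
    parser.feed(html)
    report = []
    for src, alt in parser.images:
        suggestion = suggested_captions.get(src)
        if alt is None:
            report.append((src, "missing alt", suggestion))
        elif not alt.strip():
            continue  # empty alt marks a decorative image; leave it alone
        elif suggestion is not None and token_overlap(alt, suggestion) < threshold:
            report.append((src, "alt may not match image", suggestion))
    return report


page = (
    '<img src="cats.png" alt="quarterly sales chart">'
    '<img src="lake.png">'
    '<img src="dog.png" alt="a dog on a beach">'
)
captions = {
    "cats.png": "two orange cats play with yarn",
    "lake.png": "body of water",
    "dog.png": "a dog running on a beach",
}
for row in audit_alt_text(page, captions):
    print(row)
```

The threshold of 0.2 is arbitrary. A real tool would need a far better similarity measure, and a human would still have to review every flagged image.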

When image recognition is combined with a content summarization technique, machine learning can eventually provide context for the images on a page, allowing for a better estimate of the author’s intent. Combining existing algorithms with assistive technology has a lot of potential. The accuracy of image descriptions will improve as the algorithms develop, and automated accessibility testing can then use them to make the time-consuming tasks of verifying alt text accuracy and remediating it manually faster and easier.

AI Simulation of User Navigation

Artificial intelligence (AI) is frequently used in video games to emulate human interaction. This approach typically captures screenshots or video and then applies machine learning to classify what is happening on screen while the AI performs actions. It usually relies on deep learning, so called because the underlying neural networks have many layers. Aspects of this method could be adapted for automated accessibility testing.

Some accessibility flaws are difficult to detect with automated methods, notably in the domain of keyboard accessibility. Consider the following scenario: if a modal element opens and gains visual focus but not programmatic focus, a keyboard-only user will be unable to interact with the modal or close it. The result is effectively a keyboard trap, and it is a common occurrence.

Now consider using AI to navigate through web pages using only the keyboard, with screenshots providing feedback on what is happening on the screen. The system could tell if it could no longer navigate, if navigation was exceedingly inefficient, or if it could navigate but nothing was happening on screen (as would be the case, for example, when a user is able to tab through a hamburger menu even though the menu is collapsed). AI-based observation of a page, as described here, could help detect such issues.
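
The keyboard-only walk described above can be illustrated with a toy model. The sketch below makes strong simplifying assumptions: the page is reduced to a hand-written dictionary that says which element receives focus after each Tab press, there is no browser or screenshot analysis, and the function names and element names are hypothetical. It only shows the idea of walking the focus order and reporting interactive elements that the keyboard never reaches.

```python
def simulate_tab_navigation(tab_targets, start, max_presses=100):
    """Press Tab repeatedly from `start`; return elements in the order focus visits them."""
    visited = []
    current = start
    for _ in range(max_presses):
        if current in visited:
            break  # focus has looped back to an element we already saw
        visited.append(current)
        current = tab_targets.get(current)
        if current is None:
            break  # no next element is recorded, so the walk ends here
    return visited


def find_unreachable(tab_targets, interactive, start):
    """Interactive elements that a keyboard-only walk from `start` never reaches."""
    visited = set(simulate_tab_navigation(tab_targets, start))
    return sorted(set(interactive) - visited)


# A modal is open, but Tab keeps cycling through the page behind it.
tab_targets = {
    "nav-link": "search",
    "search": "footer-link",
    "footer-link": "nav-link",
    "modal-submit": "modal-close",
    "modal-close": "modal-submit",
}
interactive = ["nav-link", "search", "footer-link", "modal-submit", "modal-close"]

print(find_unreachable(tab_targets, interactive, start="nav-link"))
```

In this example the modal's controls are reported as unreachable, which is the scenario described earlier: the modal is visible, but a keyboard-only user cannot get into it.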

Content Simplification

Content simplification can make content more accessible to people with cognitive and learning difficulties by simplifying, reorganising, and representing it in new ways. There are already technologies that do this, such as IBM’s Text Clarifier, which is designed to simplify a piece of content, but there’s still more that can be done.

Machine learning can summarize an entire piece of content for you, breaking it down into its key points. This is beneficial not only to those with impairments but also to anyone who would rather read a five-sentence summary than a 1,200-word essay.
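
As a rough illustration of the summary idea, here is a minimal extractive summarizer. It is a classic word-frequency heuristic, not a machine learning model and not how any product mentioned here works: each sentence is scored by the average frequency of its non-trivial words, and the top-scoring sentences are returned in their original order. The stopword list and the `summarize` function are assumptions made for this sketch.

```python
import re
from collections import Counter

# A tiny, illustrative stopword list; a real one would be much longer.
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "is", "was", "it", "on", "with"}


def content_words(text):
    """Lowercase words in `text`, excluding stopwords."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text, max_sentences=2):
    """Return up to `max_sentences` sentences with the highest average word frequency."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence):
        tokens = content_words(sentence)
        return sum(freq[w] for w in tokens) / len(tokens) if tokens else 0.0

    # Rank sentence indexes by score, then restore document order for readability.
    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:max_sentences])]


article = (
    "Alt text helps screen readers. "
    "Alt text describes images for screen readers. "
    "The weather was nice."
)
print(summarize(article, max_sentences=2))
```

A learned summarization model would replace the scoring function, but the surrounding flow (split, score, select, present before the full text) would look similar.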

This technique has a wide range of applications, and it once again demonstrates how a more accessible web experience for some users is a better web experience for all users. Paired with streamlined navigation and other tools, content simplification could let the user choose to read a summary before, or instead of, any block of content.

Conclusion

Artificial intelligence and machine learning advancements offer enormous potential in the field of accessibility. Of course, there is still much more research to be done, but the prospects are exciting and should be pursued.

Some of the methods we’ve mentioned will be successful only if they can overcome the challenge of obtaining user data. Other solutions, such as combining existing machine learning tools with accessibility evaluators, don’t require user data and may be used immediately.

The ideas and solutions we’ve covered will never completely replace the need for real people to design accessibility standards, perform manual testing, and teach developers and content editors how to build accessible and semantic code. Accessibility is becoming more and more ingrained in the lexicon of web development, and this trend must continue. However, even flawed solutions that help us get closer to a fully accessible web are worthwhile investments. The advantages are clear for people in various roles, from accessibility testers to developers, project managers, and, finally, end users.

Looking to implement the latest technologies that can benefit the world? We at Dexlock hold significant expertise that may be just what you are looking for. Through our skills, we deliver only the finest to fellow futurist thinkers who want to make an impact in the tech world. Connect with us here to give wings to your dreams.

Disclaimer: The opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Dexlock.