
Minerva, the latest addition to Google’s range of AI solutions, can solve math problems with 50.3% accuracy: a whopping 43.4-percentage-point jump over the 6.9% previously achieved by other state-of-the-art models in the industry.

Think it can help you with your math homework? Let’s discuss it!

What is Google Minerva?

Launched toward the end of June 2022, Google Minerva is a language model, based on the Pathways Language Model (PaLM), that can handle complex mathematical and scientific questions. This kind of quantitative reasoning is where language models still fall short of human intelligence, as it requires a combination of skills such as comprehension, reasoning and aptitude. Google’s Minerva, on the other hand, can work through mathematical problems using step-by-step reasoning. It generates solutions that incorporate numerical computations and symbolic manipulation, without relying on any external tools. The model parses and responds to mathematical queries using a combination of natural language and mathematical notation.

Source: https://ai.googleblog.com/2022/06/minerva-solving-quantitative-reasoning.html

The Minerva Mechanism

Built on PaLM, Minerva was further trained on a 118GB dataset of scientific papers from the arXiv preprint server and web pages containing mathematical expressions written in LaTeX, MathJax, or other mathematical typesetting formats. With data like this, standard text cleaning procedures might remove necessary information, such as equations or symbols. Minerva’s training data, on the other hand, deliberately keeps the equations intact within the text. To attain cutting-edge performance on STEM reasoning tasks, Minerva also relies on the following strategies:

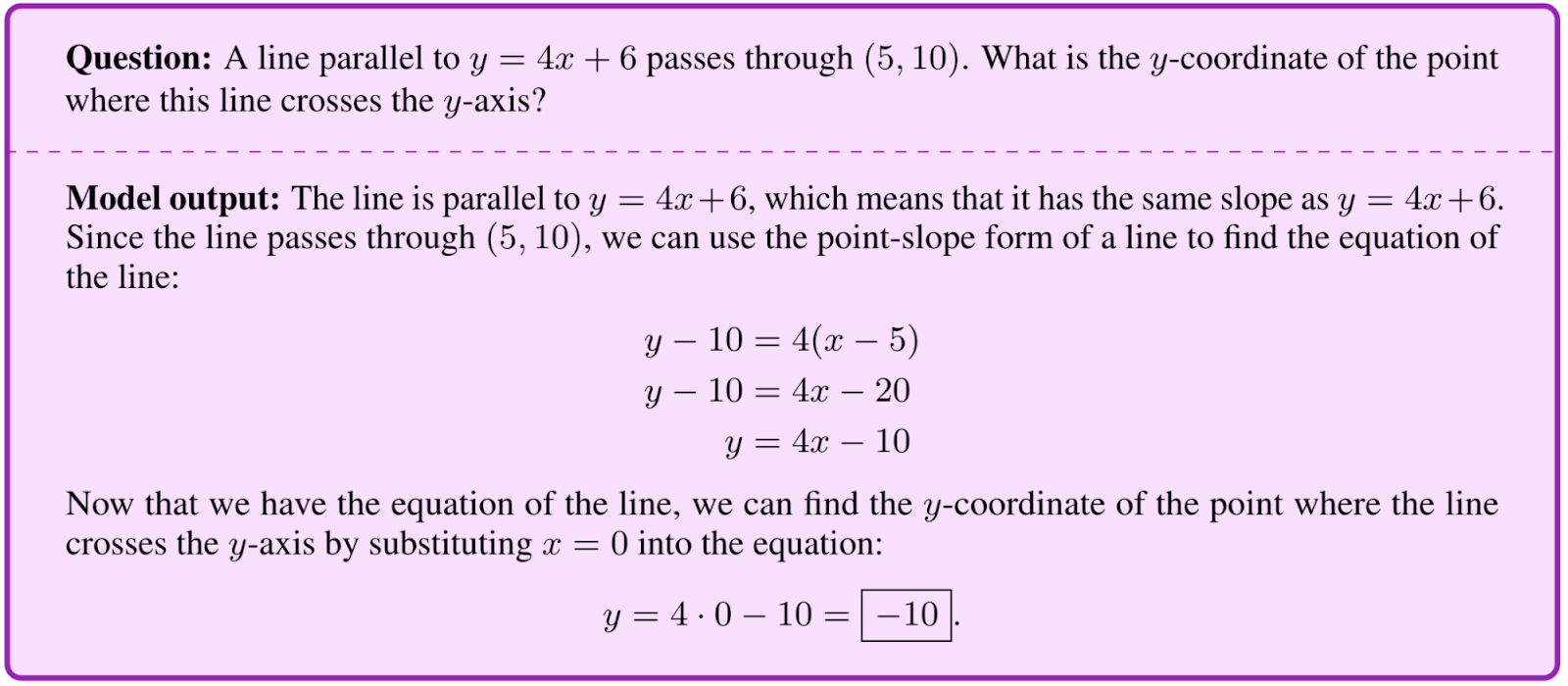

- Few-shot prompting: The model is shown only a few worked examples in the prompt and picks up the task from them.

- Chain of Thought and Scratchpad prompting: Several questions with detailed step-by-step answers are used as prompts, which leads the model to generate its own intermediate reasoning steps.

- Majority Voting: Like most language models, Minerva assigns probabilities to several potential outputs. As a result, when answering a query, the model generates multiple solutions rather than just one. Minerva then performs majority voting across these solutions and selects the most common final answer.
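To make these strategies a little more concrete, here is a minimal Python sketch of how a few-shot, chain-of-thought prompt might be assembled and how majority voting could pick a final answer. This is an illustration and not Minerva's actual code: the example problems, the `Final answer:` marker, and the function names `build_prompt`, `extract_final_answer`, and `majority_vote` are all assumptions made for this post, and the sampled completions are hard-coded stand-ins for real model output.

```python
from collections import Counter

# Hypothetical worked examples; a real prompt would use curated problems.
FEW_SHOT_EXAMPLES = [
    ("What is 12 * 3 + 4?", "12 * 3 = 36. 36 + 4 = 40.", "40"),
    ("Solve 2x + 6 = 10 for x.", "2x = 10 - 6 = 4. x = 4 / 2 = 2.", "2"),
]


def build_prompt(question):
    """Few-shot chain-of-thought prompt: worked examples, then the new question."""
    parts = []
    for q, steps, answer in FEW_SHOT_EXAMPLES:
        parts.append(f"Question: {q}\nSolution: {steps}\nFinal answer: {answer}\n")
    # The prompt ends mid-pattern so the model continues with its own steps.
    parts.append(f"Question: {question}\nSolution:")
    return "\n".join(parts)


def extract_final_answer(completion):
    """Return the text after the last 'Final answer:' marker, or None if absent."""
    marker = "Final answer:"
    if marker not in completion:
        return None
    tail = completion.rsplit(marker, 1)[1].strip()
    return tail.splitlines()[0].strip() if tail else None


def majority_vote(completions):
    """Pick the most common final answer among several sampled solutions."""
    answers = [a for a in map(extract_final_answer, completions) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


# Stand-ins for three solutions sampled from a model for "What is 7 * 8 - 6?"
samples = [
    "7 * 8 = 56. 56 - 6 = 50.\nFinal answer: 50",
    "7 * 8 = 54. 54 - 6 = 48.\nFinal answer: 48",
    "8 * 7 = 56, minus 6 gives 50.\nFinal answer: 50",
]

print(build_prompt("What is 7 * 8 - 6?"))
print(majority_vote(samples))
```

Two of the three sampled solutions agree on 50, so the vote returns that answer even though one sample contains an arithmetic slip. Note that the vote only looks at final answers, not at the reasoning that led to them.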

What are its limitations?

Like all language models, Google’s Minerva also comes with its fair share of limitations.

- Minerva only gets math problems right about 50% of the time. However, the majority of errors Minerva makes are simple to understand. About half of them are computational errors, while the others are reasoning errors, where the steps to the solution do not follow logically from one another. Additionally, its approach is not grounded in formal mathematics, so its answers cannot be verified automatically.

- Answers by the model can turn out to be false positives. The model may occasionally produce an answer where the final result is accurate but the intermediate steps are inaccurate.
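A small Python sketch can show why such false positives slip through when only the final result is checked. It is purely illustrative and makes no claim about how Minerva is actually evaluated: the solution steps are invented for this post, and the helpers `grade_by_final_answer` and `step_is_valid` are hypothetical names that only handle steps of the form `a op b = c` with integers.

```python
import operator

# Supported operators for the toy step checker (an assumption of this sketch).
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def grade_by_final_answer(answer, reference):
    """Grade a solution by comparing only its final answer to the reference."""
    return answer.strip() == reference.strip()


def step_is_valid(step):
    """Check one step of the form 'a op b = c' with integer operands."""
    left, claimed = step.split("=")
    a, op, b = left.split()
    return OPS[op](int(a), int(b)) == int(claimed)


# Invented solution to "What is 3 * 4 + 2?": two slips that cancel out.
steps = ["3 * 4 = 10", "10 + 2 = 14"]
final_answer = "14"

print(grade_by_final_answer(final_answer, "14"))  # final answer matches
print([step_is_valid(s) for s in steps])          # but neither step holds
```

Here the final answer matches the reference, so a grader that looks only at the last line counts the solution as correct, while checking the steps one by one reveals that both are wrong.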

Considering that Minerva reached this level of accuracy on math three years earlier than forecasters had originally predicted, it is safe to say that things will only get better from here. Models like Minerva that can perform quantitative reasoning have a variety of use cases, such as assisting researchers and giving students additional learning opportunities. The Minerva language model is still young and holds a plethora of possibilities ahead of it.

Like Google, we at Dexlock strive to maintain substantial expertise in the latest technologies in the market. Through our expertise in AI, ML, Big Data, Data Science and other related fields, we offer the best of services to other futurist thinkers looking to make a difference. Have a project like Minerva in mind? Connect with us here to turn your dreams into a reality.

Disclaimer: The opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Dexlock.